Supervisor–Worker Patterns, CrewAI, AutoGen & LangGraph Explained

1. Overview & Why This Matters

Multi-agent systems enable multiple AI agents to collaborate, coordinate, and solve complex tasks through structured communication and shared state.

Many production AI systems are already multi-agent: coding agents that spawn sub-agents for testing, documentation, and security review; research agents that fan out parallel retrieval workers, then synthesise the results; and telecom AIOps platforms where a fault-detection agent alerts a diagnosis agent, which in turn triggers a remediation agent. Each agent is specialised and individually observable.

For an AI Lead or senior engineer, multi-agent architecture is a key tool for scaling AI capabilities beyond what any single context window or model can achieve.

| Core question this topic answers: How do you design, coordinate, and productionise networks of AI agents that work together reliably — with controlled state, observable communication, and failure isolation between agents? |

2. Core Architecture Patterns

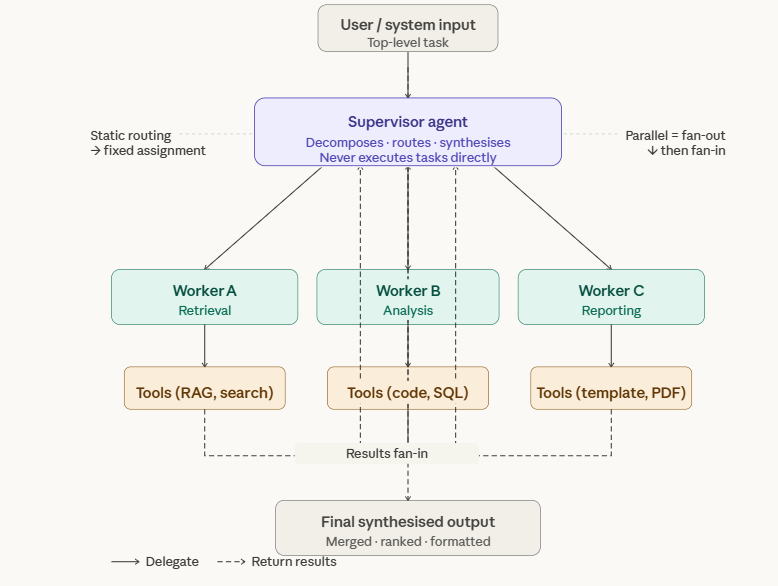

2.1 Supervisor / Worker Pattern

This is one of the most widely deployed multi-agent patterns. A supervisor (orchestrator) agent receives the top-level task, decomposes it into sub-tasks, delegates each to a specialised worker agent, collects the results, and synthesises the final output. The supervisor never executes tasks directly; it only routes and integrates.
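A minimal, framework-free sketch of that loop follows, assuming plain Python functions as workers. The worker names, the fixed plan in `decompose`, and the string-joining `synthesise` step are hypothetical stand-ins for LLM or tool calls. What matters is the control flow: the supervisor plans, delegates, collects, and integrates, but never does the work itself.

```python
from typing import Callable


# Hypothetical workers: in a real system each would wrap an LLM or tool call.
def retrieval_worker(subtask: str) -> str:
    return f"docs for '{subtask}'"


def analysis_worker(subtask: str) -> str:
    return f"analysis of '{subtask}'"


WORKERS: dict[str, Callable[[str], str]] = {
    "retrieval": retrieval_worker,
    "analysis": analysis_worker,
}


def decompose(task: str) -> list[tuple[str, str]]:
    # Hypothetical fixed plan of (worker name, sub-task) pairs; an LLM
    # supervisor would generate this plan instead.
    return [("retrieval", f"find data on {task}"), ("analysis", f"explain {task}")]


def synthesise(results: list[str]) -> str:
    # Placeholder integration step; normally another LLM call.
    return " | ".join(results)


def supervisor(task: str) -> str:
    results = []
    for worker_name, subtask in decompose(task):
        # Delegate each sub-task and collect the result; no direct execution here.
        results.append(WORKERS[worker_name](subtask))
    return synthesise(results)
```

For example, `supervisor("KPI drop")` returns `"docs for 'find data on KPI drop' | analysis of 'explain KPI drop'"`.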

Key design decisions for the supervisor pattern:

- Static vs dynamic routing: Static routing assigns fixed tasks to fixed workers (predictable, auditable). Dynamic routing lets the supervisor decide at runtime which agent to call based on intermediate results (more flexible, harder to debug).

- Sequential vs parallel execution: Workers can run sequentially (each result feeds the next) or in parallel (fan-out, then fan-in). Parallel execution can cut latency sharply for independent sub-tasks but requires careful result merging.

- Supervisor intelligence level: A dumb supervisor (a pure router with hardcoded rules) is predictable. A smart supervisor (LLM-based) is flexible but adds a reasoning cost to every invocation. Match the choice to your task complexity.
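To make the sequential versus parallel trade-off concrete, here is a sketch using only the standard library. The three workers (`kpi`, `alarms`, `neighbors`) are hypothetical and simulate I/O-bound LLM or tool calls with `time.sleep`. Routing is static (a fixed name-to-worker table), and the fan-in step keys results by worker name so the merged output does not depend on which worker finishes first.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def make_worker(name: str, delay_s: float) -> Callable[[str], str]:
    # Hypothetical worker that simulates a slow, I/O-bound call.
    def run(subtask: str) -> str:
        time.sleep(delay_s)
        return f"{name}: {subtask}"
    return run


# Static routing: each sub-task type always goes to the same worker.
WORKERS = {
    "kpi": make_worker("kpi", 0.2),
    "alarms": make_worker("alarms", 0.2),
    "neighbors": make_worker("neighbors", 0.2),
}


def run_sequential(subtasks: dict[str, str]) -> dict[str, str]:
    # Total latency is roughly the sum of the worker delays.
    return {name: WORKERS[name](task) for name, task in subtasks.items()}


def run_parallel(subtasks: dict[str, str]) -> dict[str, str]:
    # Fan-out: submit every independent sub-task at once.
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        futures = {name: pool.submit(WORKERS[name], task) for name, task in subtasks.items()}
        # Fan-in: merge by worker name, independent of completion order.
        return {name: future.result() for name, future in futures.items()}
```

With three 0.2 s workers, `run_sequential` takes roughly 0.6 s and `run_parallel` roughly 0.2 s, and both return the same dictionary. The speed-up only holds when the sub-tasks are genuinely independent; a worker that needs another worker's output has to run after it.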

2.2 Peer-to-Peer / Collaborative Pattern

Agents communicate directly with each other without a central supervisor. This is common in debate, peer-review, and adversarial verification patterns where agents challenge each other’s outputs. It is more complex to implement but can produce higher-quality outputs for ambiguous tasks.

Primary use case: Quality assurance — an agent generates a solution, a critic agent evaluates it, the generator revises, and a judge agent decides when quality is sufficient. This mimics expert peer review.

Example: AutoGen Peer Review Pattern (Telecom Config Validation)

- Agent 1, Config Generator: produces a candidate 5G NR cell configuration.
- Agent 2, Validator: checks the config against 3GPP TS 38.101 parameter bounds.
- Agent 3, Critic: identifies performance risks (capacity, interference).
- Agent 4, Judge: approves the final config or requests another revision cycle.
- Max rounds: 3. Termination: Judge approval or max_rounds reached.
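The generate, validate, critique, judge cycle can be sketched as a bounded loop. Everything domain-specific here is hypothetical: the two parameters, their bounds (illustrative numbers, not values taken from 3GPP TS 38.101), the critic's tilt threshold, and the generator's "reset to mid-range" repair strategy. In a real system each function would be an LLM agent. The point is the loop structure and its two termination conditions.

```python
from typing import Optional

# Hypothetical bounds for illustration only; NOT values from 3GPP TS 38.101.
BOUNDS = {"tx_power_dbm": (0.0, 46.0), "tilt_deg": (0.0, 15.0)}
CRITIC_MAX_TILT = 12.0  # hypothetical performance-risk threshold


def generator(previous: Optional[dict], feedback: list[str]) -> dict:
    if previous is None:
        return {"tx_power_dbm": 50.0, "tilt_deg": 20.0}  # deliberately flawed draft
    revised = dict(previous)
    for param in feedback:
        low, high = BOUNDS[param]
        revised[param] = (low + high) / 2  # naive repair: move to mid-range
    return revised


def validator(config: dict) -> list[str]:
    # Flag every parameter outside its bounds.
    return [p for p, (low, high) in BOUNDS.items() if not low <= config[p] <= high]


def critic(config: dict) -> list[str]:
    # Flag a (hypothetical) performance risk even if the value is within bounds.
    return ["tilt_deg"] if config["tilt_deg"] > CRITIC_MAX_TILT else []


def judge(issues: list[str]) -> bool:
    return not issues  # approve only when nobody raised an issue


def review_loop(max_rounds: int = 3) -> tuple[dict, bool, int]:
    config: Optional[dict] = None
    feedback: list[str] = []
    for round_no in range(1, max_rounds + 1):
        config = generator(config, feedback)
        issues = validator(config) + critic(config)
        if judge(issues):
            return config, True, round_no  # terminated by Judge approval
        feedback = sorted(set(issues))
    return config, False, max_rounds  # terminated by max_rounds
```

Here `review_loop()` is approved in round 2 with `{"tx_power_dbm": 23.0, "tilt_deg": 7.5}`, while `review_loop(max_rounds=1)` stops unapproved after the first round.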

2.3 Hierarchical Multi-Agent Pattern

A tree of supervisors and workers — supervisors can themselves be workers for a higher-level supervisor. Used for very large task decomposition where a single supervisor would have too many direct reports or too much context to manage effectively.

Example: An enterprise AI platform where a top-level strategy agent delegates to domain supervisors (network, billing, CX) which each manage their own worker pools. Each level only knows about the level directly below it.
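A sketch of the tree structure follows. It assumes each node is simply a callable that takes a task string and returns a result string. Because a `Supervisor` is itself callable, it can be registered as a worker of a higher-level supervisor, and each supervisor's router only sees the names of its direct children. The domains, worker stubs, and keyword routers are hypothetical; a real router would typically be an LLM or a classifier.

```python
from typing import Callable

Handler = Callable[[str], str]


class Supervisor:
    """A node in the hierarchy whose children are workers or other supervisors."""

    def __init__(self, name: str, children: dict[str, Handler], router: Callable[[str], str]) -> None:
        self.name = name
        self.children = children
        self.router = router  # returns the name of a direct child only

    def __call__(self, task: str) -> str:
        child = self.children[self.router(task)]
        # Prefix the result with this node's name to record the delegation path.
        return f"{self.name} > {child(task)}"


# Hypothetical domain supervisors with stub workers and keyword routers.
network = Supervisor(
    "network",
    {"rca": lambda task: "rca-worker", "config": lambda task: "config-worker"},
    router=lambda task: "config" if "config" in task else "rca",
)
billing = Supervisor("billing", {"invoice": lambda task: "invoice-worker"}, router=lambda task: "invoice")

# The top-level strategy agent only knows its two domain supervisors.
strategy = Supervisor(
    "strategy",
    {"network": network, "billing": billing},
    router=lambda task: "billing" if "invoice" in task else "network",
)
```

Calling `strategy("cell config change")` returns `"strategy > network > config-worker"`, and `strategy("invoice dispute")` returns `"strategy > billing > invoice-worker"`.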

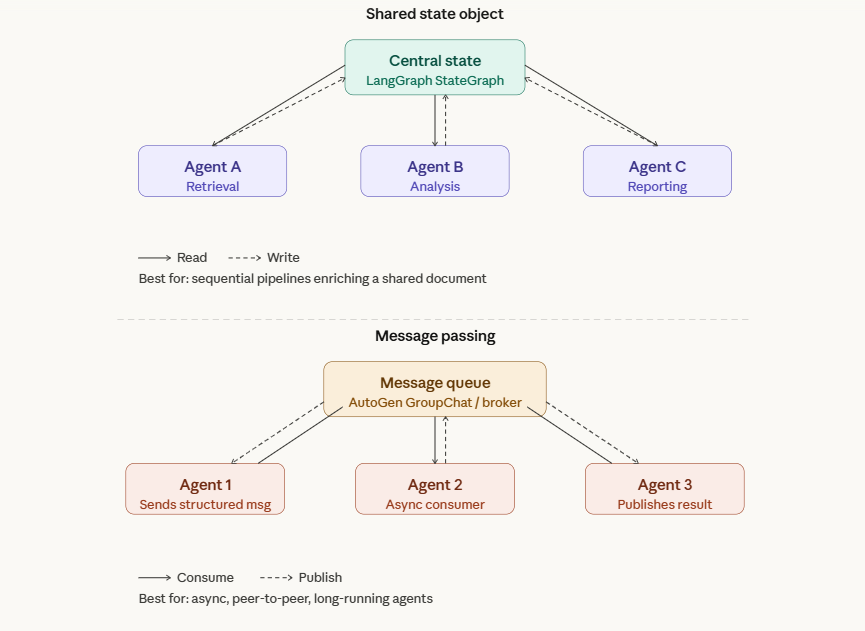

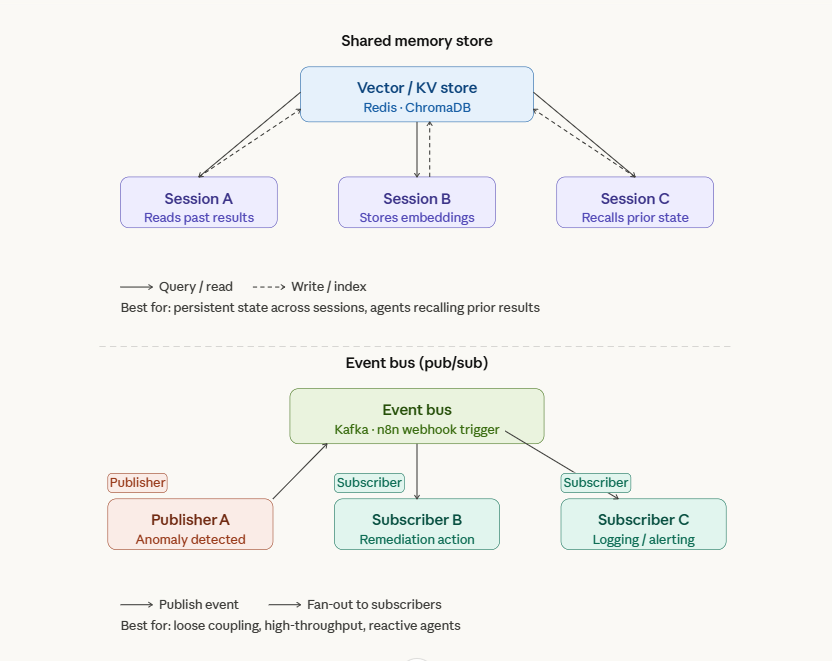

2.4 Shared State & Inter-Agent Messaging

How agents share information is one of the most critical, and most often mishandled, design decisions in multi-agent systems. There are four primary mechanisms:

| Mechanism | How It Works | When to Use |
|---|---|---|
| Shared State Object | Agents read from and write to a central state dict (LangGraph StateGraph) | Sequential pipelines, when each agent enriches the same document/record |
| Message Passing | Agents send structured messages to each other via a queue or broker (AutoGen GroupChat) | Peer-to-peer collaboration, asynchronous workflows, long-running agents |
| Shared Memory Store | Agents read/write to an external vector DB or key-value store (Redis, ChromaDB) | Persistent state across sessions, agents that need to recall prior results |
| Event Bus | Agents publish/subscribe to typed events (Kafka, n8n webhook triggers) | Loose coupling, high throughput, when agents should react to state changes rather than be called directly |
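The event bus is the least familiar of these mechanisms, so here is an in-process sketch of the idea. `EventBus` is a hypothetical stand-in for Kafka topics or webhook triggers, and the event names and payloads are made up for illustration. The detection, diagnosis, and remediation chain from the overview is wired purely through subscriptions: no agent calls another directly.

```python
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Minimal in-process publish/subscribe bus (stand-in for Kafka or webhooks)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = {}

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers.setdefault(event_type, []).append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The publisher does not know who, if anyone, is listening.
        for handler in self._subscribers.get(event_type, []):
            handler(payload)


bus = EventBus()
log: list[str] = []


def diagnosis_agent(event: dict) -> None:
    log.append(f"diagnose {event['alarm_id']}")
    # Hypothetical result; a real agent would run tools and an LLM here.
    bus.publish("diagnosis.ready", {"alarm_id": event["alarm_id"], "root_cause": "fibre cut"})


def remediation_agent(event: dict) -> None:
    log.append(f"remediate {event['alarm_id']} ({event['root_cause']})")


bus.subscribe("alarm.detected", diagnosis_agent)
bus.subscribe("diagnosis.ready", remediation_agent)

# The detection agent only announces what it saw.
bus.publish("alarm.detected", {"alarm_id": "A-1"})
```

After the final `publish`, `log` holds `["diagnose A-1", "remediate A-1 (fibre cut)"]`. This bus dispatches synchronously in the publisher's thread; a real broker delivers events asynchronously, which is what makes it suitable for high-throughput, loosely coupled agents.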

| Critical Design Rule: Never let agents write to each other’s private state. Define a clear shared state schema upfront, with explicit read/write permissions per agent role. Uncontrolled state mutation is one of the most common causes of non-deterministic, hard-to-debug multi-agent failures. |
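One way to enforce this rule is to route every read and write through a small gatekeeper, sketched below. The role names, field names, permission table, and the `StateWriteError` exception are hypothetical (the fields are loosely modelled on the telecom schema in section 4.1). Agents receive a read-only view containing only the fields their role may read, and writes outside a role's allowed fields are rejected.

```python
from types import MappingProxyType
from typing import Mapping

# Hypothetical per-role permissions.
PERMISSIONS = {
    "detection": {"read": {"alarm_details"}, "write": {"affected_cells", "alarm_severity"}},
    "diagnosis": {"read": {"alarm_details", "affected_cells"}, "write": {"probable_root_cause"}},
}


class StateWriteError(Exception):
    """Hypothetical error raised when an agent writes a field its role does not own."""


class SharedState:
    def __init__(self, initial: dict) -> None:
        self._data = dict(initial)

    def view(self, role: str) -> Mapping:
        # Read-only projection limited to the fields this role may read.
        allowed = PERMISSIONS[role]["read"]
        return MappingProxyType({k: v for k, v in self._data.items() if k in allowed})

    def write(self, role: str, updates: dict) -> None:
        forbidden = set(updates) - PERMISSIONS[role]["write"]
        if forbidden:
            raise StateWriteError(f"{role} may not write {sorted(forbidden)}")
        self._data.update(updates)
```

The view is read-only only at the top level: nested lists or dicts inside it can still be mutated, so a stricter version would copy or freeze values before handing them to an agent. Scoping reads this way also addresses the context-leakage failure mode in section 5.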

3. Frameworks: Hands-On Comparison

3.1 LangGraph — Graph-Based Agent Orchestration

LangGraph models multi-agent systems as directed graphs where nodes are agents or functions and edges are transitions. State flows through the graph, and each node can read it and return updates to it. It is a strong fit for production workflows that must be deterministic and auditable.

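```python
# LangGraph supervisor pattern skeleton
from typing import Literal, TypedDict
from langgraph.graph import END, StateGraph

class AgentState(TypedDict):
    task: str
    retrieval_result: str
    analysis_result: str
    final_output: str
    next_agent: str

def supervisor_node(state: AgentState) -> dict:
    # LLM decides which agent to call next. `supervisor_llm` is assumed to be a
    # structured-output model (defined elsewhere) returning an object with a
    # `.next` field. Pass the whole state, not just the task, so the decision
    # can reflect progress so far.
    decision = supervisor_llm.invoke(str(state))
    # Return only the updated field; LangGraph merges it into the state.
    return {"next_agent": decision.next}

def route(state: AgentState) -> Literal["retrieval", "analysis", "report", "end"]:
    return state["next_agent"]

graph = StateGraph(AgentState)
graph.add_node("supervisor", supervisor_node)
# retrieval_agent, analysis_agent and report_agent are assumed worker node
# functions (defined elsewhere) that return the state fields they own.
graph.add_node("retrieval", retrieval_agent)
graph.add_node("analysis", analysis_agent)
graph.add_node("report", report_agent)
# Map each routing label to a node; "end" must map to the END sentinel.
graph.add_conditional_edges(
    "supervisor",
    route,
    {"retrieval": "retrieval", "analysis": "analysis", "report": "report", "end": END},
)
# Every worker reports back to the supervisor, which picks the next step.
for worker in ("retrieval", "analysis", "report"):
    graph.add_edge(worker, "supervisor")
graph.set_entry_point("supervisor")
app = graph.compile()
```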

3.2 CrewAI — Role-Based Agent Teams

CrewAI abstracts multi-agent systems as crews of agents with defined roles, goals, and backstories. It handles task delegation automatically and supports sequential and hierarchical process modes. Best for rapid prototyping of business-oriented agent teams.

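```python
# Example CrewAI crew
from crewai import Agent, Crew, Process, Task

# cell_kpi_tool, alarm_query_tool, neighbor_tool and document_tool are assumed
# to be tool objects defined elsewhere.
network_analyst = Agent(
    role="5G Network Analyst",
    goal="Diagnose root cause of KPI degradation in RAN",
    backstory="Expert in 5G NR, experienced with RAN",
    tools=[cell_kpi_tool, alarm_query_tool, neighbor_tool],
    verbose=True,
)

report_writer = Agent(
    role="Technical Report Writer",
    goal="Produce clear, actionable incident reports",
    backstory="Writes incident reports for NOC engineers",  # illustrative; CrewAI requires a backstory
    tools=[document_tool],
)

diagnose_task = Task(
    description="Analyse cell {cell_id} KPI drop in last 4 hours",
    expected_output="Probable root cause with supporting evidence",  # illustrative
    agent=network_analyst,
)

# Illustrative report task; in a sequential crew it runs after diagnose_task.
report_task = Task(
    description="Write an incident report for cell {cell_id} based on the diagnosis",
    expected_output="Incident report with root cause and recommended actions",
    agent=report_writer,
    context=[diagnose_task],  # feeds the diagnosis output into this task
)

crew = Crew(
    agents=[network_analyst, report_writer],
    tasks=[diagnose_task, report_task],
    process=Process.sequential,
)

# {cell_id} placeholders are filled from the kickoff inputs (hypothetical ID).
result = crew.kickoff(inputs={"cell_id": "CELL-001"})
```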

3.3 AutoGen — Conversational Multi-Agent

AutoGen (Microsoft) models agent collaboration as group conversations. Agents are conversation participants that send and receive messages. Supports human-in-the-loop natively and is excellent for peer-review and debate patterns where agents challenge each other.
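The conversational model reduces to three pieces: a shared transcript, a rule for choosing the next speaker, and a termination condition. The sketch below shows those pieces with round-robin speaker selection and a stop word. It does not use AutoGen's API; `group_chat` is a hypothetical helper, and the writer and reviewer are stubs standing in for LLM-backed participants.

```python
from typing import Callable

Message = tuple[str, str]  # (speaker, content)
Participant = Callable[[list[Message]], str]


def group_chat(agents: dict[str, Participant], opening: str,
               max_turns: int = 6, stop_word: str = "APPROVED") -> list[Message]:
    transcript: list[Message] = [("user", opening)]
    names = list(agents)
    for turn in range(max_turns):
        speaker = names[turn % len(names)]  # round-robin speaker selection
        reply = agents[speaker](transcript)  # each agent sees the whole history
        transcript.append((speaker, reply))
        if stop_word in reply:  # termination message ends the conversation
            break
    return transcript


# Hypothetical stub participants.
def writer(history: list[Message]) -> str:
    version = sum(1 for speaker, _ in history if speaker == "writer") + 1
    return f"draft v{version}"


def reviewer(history: list[Message]) -> str:
    return "APPROVED" if history[-1][1] == "draft v2" else "please revise"
```

Running `group_chat({"writer": writer, "reviewer": reviewer}, "Draft an incident summary")` ends after four agent turns with the reviewer's `"APPROVED"` as the last message. `max_turns` is the backstop for conversations in which nobody ever sends the stop word.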

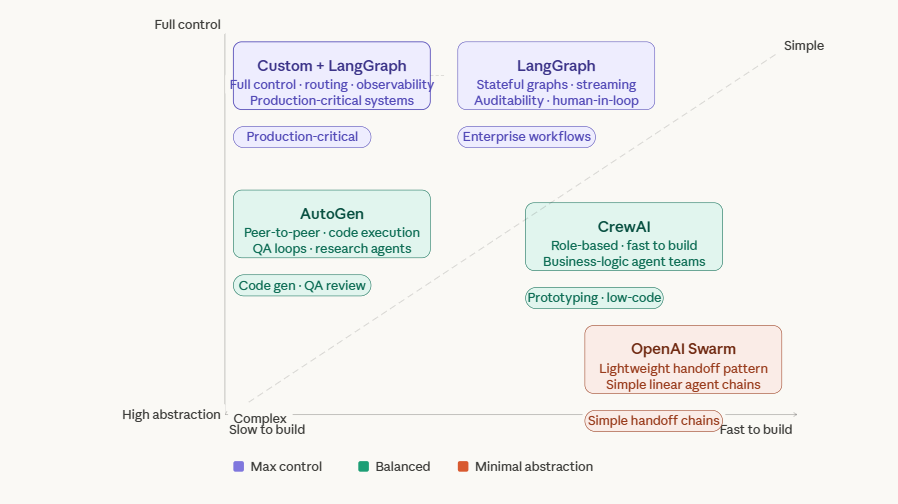

3.4 Framework Selection Matrix

| Framework | Strengths | Best Fit |
|---|---|---|
| LangGraph | Stateful graphs, full control, streaming, human-in-loop, production-grade | Complex enterprise workflows, when auditability and determinism matter |
| CrewAI | Fast to build, role-based abstraction, good tooling | Prototyping, business-logic agent teams, less engineering-heavy orgs |
| AutoGen | Peer-to-peer conversation, code execution, human-in-loop | Code generation, QA review loops, research agents |
| OpenAI Swarm | Lightweight handoff pattern, minimal abstraction | Simple linear handoff between specialised agents |
| Custom + LangGraph | Full control over routing, state, observability | Production-critical systems where framework abstractions add risk |

4. Inter-Agent Communication & Shared State Design

4.1 Designing the State Schema

The shared state object is the contract between all agents in a multi-agent system. Treat it like a database schema — design it carefully upfront. A poorly designed state schema causes agents to overwrite each other’s work or silently lose information.

```python
# Production state schema for a telecom AIOps multi-agent system
from typing import TypedDict


class TelecomAIOpsState(TypedDict):
    # Input
    alarm_id: str
    alarm_details: dict

    # Detection agent outputs
    affected_cells: list[str]
    alarm_severity: str  # CRITICAL / MAJOR / MINOR

    # Diagnosis agent outputs
    probable_root_cause: str
    evidence: list[dict]  # list of {tool, result, timestamp}
    confidence_score: float  # 0.0-1.0

    # Remediation agent outputs
    recommended_actions: list[str]
    auto_remediate: bool

    # Control flow
    current_agent: str
    iteration_count: int
    errors: list[str]  # agent errors accumulated
    human_review_required: bool
```

State schema best practices:

- Immutable agent outputs: Once an agent writes its output fields, downstream agents should never overwrite them. Append to lists rather than replacing values.

- Explicit error accumulation: Add an errors list field. Every agent appends its failures here instead of raising exceptions that crash the whole graph.

- Control flow fields: Include current_agent, iteration_count, and human_review_required as first-class state fields, not side-channel signals.
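The first two rules can be enforced in one place: the function that merges an agent's update into the shared state. The sketch below is framework-free and assumes plain dict state. The field names follow the `TelecomAIOpsState` schema above, but which fields are append-only or write-once is an illustrative choice, and `run_agent` is a hypothetical wrapper. (In LangGraph, append-only fields are usually declared with reducer annotations on the state type instead.)

```python
from typing import Callable

# Illustrative groupings based on the TelecomAIOpsState fields.
APPEND_ONLY = {"evidence", "errors"}
WRITE_ONCE = {"probable_root_cause", "confidence_score", "alarm_severity"}


def apply_update(state: dict, update: dict) -> dict:
    """Return a new state with `update` merged in under the schema rules."""
    new_state = dict(state)
    for key, value in update.items():
        if key in APPEND_ONLY:
            # Lists only grow; earlier entries are never replaced.
            new_state[key] = new_state.get(key, []) + list(value)
        elif key in WRITE_ONCE and new_state.get(key) is not None:
            # Keep the upstream value and record the attempted overwrite.
            new_state["errors"] = new_state.get("errors", []) + [f"blocked overwrite of '{key}'"]
        else:
            new_state[key] = value
    return new_state


def run_agent(agent: Callable[[dict], dict], state: dict) -> dict:
    # Hypothetical wrapper: failures become entries in `errors`, not crashes.
    try:
        update = agent(state)
    except Exception as exc:
        update = {"errors": [f"{agent.__name__}: {exc}"]}
    return apply_update(state, update)
```

A diagnosis agent that sets `probable_root_cause`, followed by an agent that tries to overwrite it and one that raises, leaves the original root cause intact and both problems recorded in `errors`, while the graph keeps running.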

4.2 Human-in-the-Loop Integration

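Production multi-agent systems for enterprise use cases must support human review at critical decision points. In LangGraph, you can pass interrupt_before and interrupt_after (lists of node names) when compiling a graph; execution pauses before or after those nodes so a human can inspect the state and provide input before it resumes. Pausing and resuming requires a checkpointer, so the graph state is persisted while it waits.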

Human-in-the-Loop Trigger Points (Telecom Use Case):

1. After diagnosis: if confidence_score < 0.75 → pause for NOC engineer review.
2. Before auto-remediation: if the action involves a cell configuration change → mandatory approval.
3. After 3 failed remediation attempts → escalate to a human and freeze the agent loop.
4. If alarm_severity == CRITICAL and auto_remediate == False → immediate human handoff.
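The four trigger rules translate directly into a check the graph can run before each sensitive step. In the sketch below the state is a plain dict using fields from `TelecomAIOpsState`, plus two hypothetical conventions that the schema does not define: a `failed_remediations` counter and a `config_change:` prefix marking actions that alter cell configuration. The function returns every reason that applies, so `human_review_required` is simply whether the list is non-empty.

```python
CONFIDENCE_THRESHOLD = 0.75  # from trigger 1
MAX_FAILED_REMEDIATIONS = 3  # from trigger 3


def human_review_reasons(state: dict) -> list[str]:
    reasons = []
    confidence = state.get("confidence_score")
    if confidence is not None and confidence < CONFIDENCE_THRESHOLD:
        reasons.append("low diagnosis confidence")  # trigger 1
    # Hypothetical convention: config-changing actions start with "config_change:".
    if any(a.startswith("config_change:") for a in state.get("recommended_actions", [])):
        reasons.append("cell configuration change needs approval")  # trigger 2
    # Hypothetical counter; not part of the TelecomAIOpsState schema.
    if state.get("failed_remediations", 0) >= MAX_FAILED_REMEDIATIONS:
        reasons.append(f"{MAX_FAILED_REMEDIATIONS} failed remediation attempts: freeze loop")  # trigger 3
    if state.get("alarm_severity") == "CRITICAL" and not state.get("auto_remediate", False):
        reasons.append("critical alarm without auto-remediation")  # trigger 4
    return reasons
```

In a LangGraph setup, a check like this could drive a conditional edge towards a review node listed in interrupt_before, so the pause happens only when at least one trigger fires.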

5. Common Issues, Failures & Pitfalls

| Failure Mode | Root Cause | Mitigation |
|---|---|---|
| Agent echo chamber | Agents agree with each other rather than critically evaluating, especially when they share the same base model | Use different temperatures per agent role; add an explicit ‘devil’s advocate’ role; use adversarial critique prompts |
| State mutation conflicts | Two parallel agents write to the same state field simultaneously | Strictly partition state write permissions per agent; use append-only patterns for shared fields |
| Runaway agent loops | Supervisor keeps delegating without making progress; no termination condition | Enforce max_iterations at graph level; detect repeated (agent, task) pairs as a loop signature |
| Context leakage | Agent A receives sensitive outputs from Agent B that it should not have | Scope each agent’s context to only the state fields it needs; never pass the full state to all agents |
| Cascading failures | Agent A’s failure cascades to B, C, D with no isolation | Implement circuit breakers per agent; keep an errors list in state; failed agents return partial results, not exceptions |
| Cost explosion | Multi-agent fan-out with GPT-4o at every node is prohibitively expensive | Use cheap, fast models (GPT-4o-mini, Haiku) for routing and simple tasks; reserve expensive models for complex reasoning nodes |
| Non-deterministic outputs | Same input produces wildly different multi-agent paths | Set temperature=0 for supervisor/routing nodes; add deterministic routing rules for common patterns |
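Two of these mitigations are small enough to sketch directly: a loop guard that enforces `max_iterations` and treats a repeated (agent, task) pair as a loop signature, and a per-agent circuit breaker that stops calling an agent after repeated failures and returns a fallback partial result instead. Both are simplified, hypothetical helpers. In particular, a production circuit breaker usually also has a cooldown ("half-open") state that retries the agent after a while, which this version omits.

```python
from typing import Callable, Optional


class LoopGuard:
    """Stops a supervisor on max_iterations or a repeated (agent, task) pair."""

    def __init__(self, max_iterations: int = 10) -> None:
        self.max_iterations = max_iterations
        self.iterations = 0
        self.seen: set[tuple[str, str]] = set()

    def check(self, agent: str, task: str) -> Optional[str]:
        """Return a stop reason, or None if this delegation may proceed."""
        self.iterations += 1
        if self.iterations > self.max_iterations:
            return "max_iterations exceeded"
        if (agent, task) in self.seen:
            return f"loop detected: {agent} / {task}"
        self.seen.add((agent, task))
        return None


class CircuitBreaker:
    """Simplified sketch: fails fast after `threshold` consecutive failures (no half-open state)."""

    def __init__(self, threshold: int = 3) -> None:
        self.threshold = threshold
        self.failures = 0

    def call(self, agent: Callable[[str], str], task: str, fallback: str) -> str:
        if self.failures >= self.threshold:
            return fallback  # circuit open: skip the failing agent entirely
        try:
            result = agent(task)
        except Exception:
            self.failures += 1
            return fallback  # partial result instead of a propagated exception
        self.failures = 0  # a success resets the count
        return result
```

The supervisor would call `guard.check(...)` before every delegation and stop (or escalate) on a non-`None` reason, and would keep one `CircuitBreaker` per worker so a single failing agent cannot drag the others down.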

6. Market Landscape & 2025 Trends

6.1 Production Multi-Agent Platforms

| Platform / Tool | Role in Multi-Agent Stack |
|---|---|
| LangGraph Cloud | Managed graph execution, built-in persistence, real-time streaming, deployment API. Production-ready LangGraph hosting. |
| CrewAI Enterprise | Commercial CrewAI with management UI, agent monitoring, role-based access. Good for less technical teams. |
| AutoGen Studio | GUI for designing AutoGen multi-agent conversations. Good for rapid prototyping. |
| AWS Bedrock Multi-Agent | Native multi-agent orchestration on AWS with built-in memory and trace logging. Best for AWS-native stacks. |
| Azure AI Agent Service | Microsoft managed multi-agent with AutoGen integration. Strong enterprise governance. |
| Google Vertex AI Agent Engine | Multi-agent on GCP with Gemini backbone, native integration with BigQuery and Google tools. |

6.2 Emerging Multi-Agent Patterns

- Agent-to-Agent (A2A) Protocol (Google, 2025): A standardised inter-agent communication protocol that lets agents built with different frameworks and by different vendors communicate. Adoption is still early, but it could become the REST equivalent for agent communication.

- Model Context Protocol (MCP) for multi-agent: MCP is emerging as the standard for agent tool definitions. Multi-agent systems where every agent discovers tools via MCP enable plug-and-play agent architectures.

- Speculative multi-agent execution: Running multiple agent paths speculatively in parallel, then selecting the best result. Trading compute cost for quality and latency reduction on critical paths.

- Long-running persistent agents: Agents that maintain state across days or weeks using external memory stores. Moving from request-response patterns to always-on autonomous agents with scheduled check-ins.
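Speculative execution is a small variation on fan-out: instead of splitting one task into independent parts, several alternative paths attempt the same task and a scorer keeps the best answer. In the sketch below, `speculative_run` is a hypothetical helper, the candidate paths are stubs, and `score` is a placeholder (here simply `len`); in practice it might be a judge model or a validation check. Every path is paid for, including the losing ones.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def speculative_run(paths: dict[str, Callable[[str], str]], task: str,
                    score: Callable[[str], float]) -> tuple[str, str]:
    """Run all candidate paths in parallel; return (winning path, its result)."""
    with ThreadPoolExecutor(max_workers=len(paths)) as pool:
        futures = {name: pool.submit(path, task) for name, path in paths.items()}
        results = {name: future.result() for name, future in futures.items()}
    best = max(results, key=lambda name: score(results[name]))
    return best, results[best]


# Hypothetical candidate paths for the same task.
paths = {
    "fast": lambda task: f"{task}: likely config issue",
    "thorough": lambda task: f"{task}: config issue, confirmed by alarm and KPI evidence",
}
```

With the placeholder scorer, `speculative_run(paths, "cell 42", score=len)` picks `"thorough"`. A latency-oriented variant would instead return the first result that passes a validation check and stop waiting for the rest.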

7. Expert Tips & Quick Reference

Expert Practitioner Tips

1. Design the state schema before the agents. The schema is the system contract; changing it later means rewriting every agent that touches the affected fields.
2. Match model cost to each node's job: cheap, fast models for routing and simple tasks, expensive models only where complex reasoning happens. For example, a GPT-4o supervisor that handles decomposition, paired with Haiku workers for simple sub-tasks, can cost a fraction of an all-GPT-4o setup and is often nearly as accurate.
3. Every multi-agent system needs a timeout and a fallback. Define what ‘partial success’ looks like: returning incomplete results is better than hanging indefinitely.
4. In interviews, differentiate yourself by discussing agent isolation; most candidates describe only the happy path. Ask yourself: what happens when Agent B returns an error, and what does Agent C receive?
5. For enterprise deployments, frame multi-agent systems around compliance: who approved which action, which agent made which decision, and what evidence was used. Built-in audit trails are non-negotiable.
6. Test each agent in isolation before testing the whole system. A failing multi-agent integration test tells you little about which agent broke; isolated per-agent unit tests localise the fault.
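Tip 3 can be sketched with the standard library's `concurrent.futures.wait`, which splits futures into those that finished within a deadline and those that did not. The agent callables are hypothetical, and the report shape (`status`, `results`, `errors`, `timed_out`) is one possible definition of ‘partial success’, not a standard. One caveat: Python threads cannot be killed, so a timed-out agent keeps running in the background; the supervisor simply stops waiting for it.

```python
from concurrent.futures import ThreadPoolExecutor, wait
from typing import Callable


def run_with_deadline(agents: dict[str, Callable[[], str]], timeout_s: float) -> dict:
    """Run agents concurrently and report whatever completed before the deadline."""
    pool = ThreadPoolExecutor(max_workers=len(agents))
    futures = {pool.submit(agent): name for name, agent in agents.items()}
    done, not_done = wait(futures, timeout=timeout_s)
    # Stop waiting: cancel anything not yet started; running threads finish on their own.
    pool.shutdown(wait=False, cancel_futures=True)

    results, errors = {}, {}
    for future in done:
        name = futures[future]
        if future.exception() is not None:
            errors[name] = repr(future.exception())
        else:
            results[name] = future.result()

    # One possible 'partial success' report shape (an assumption, not a standard).
    complete = not not_done and not errors
    return {
        "status": "complete" if complete else "partial",
        "results": results,
        "errors": errors,
        "timed_out": sorted(futures[f] for f in not_done),
    }
```

A supervisor can then synthesise from `results`, mention what is missing from `timed_out` and `errors`, and return within its deadline instead of hanging on the slowest agent.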